Lane Detection

Project for the Udacity Self-Driving Car Nanodegree

This is my submission for the Udacity Self-Driving Car Nanodegree Lane Detection Project. You can find my code in this Jupyter Notebook.

Summary

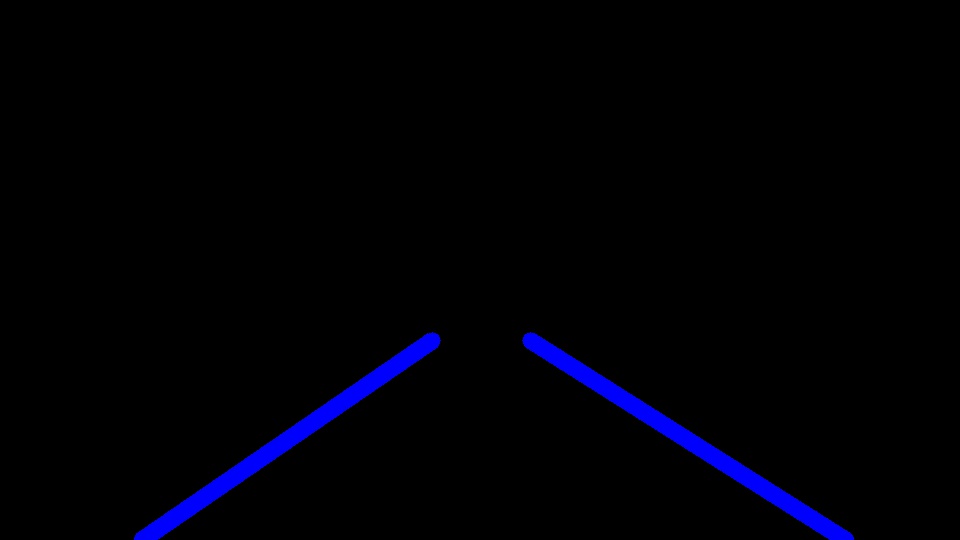

The pipeline takes a video of a street as input and outputs it with the lane markers highlighted.

Finding Lane Lines on the Road

The goals / steps of this project are the following:

- Make a pipeline that finds lane lines on the road

- Reflect on your work in a written report

The Lane Line Detection Pipeline

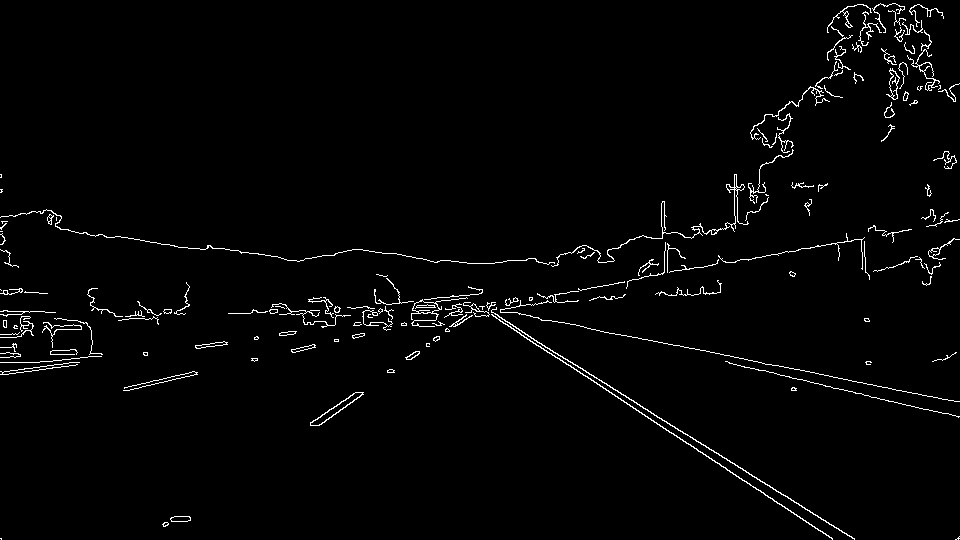

My pipeline consisted of 5 steps:

- Convert the images to grayscale

- Apply Gaussian smoothing in order to reduce the noise in the image

- Detect edges using the Canny edge detection algorithm

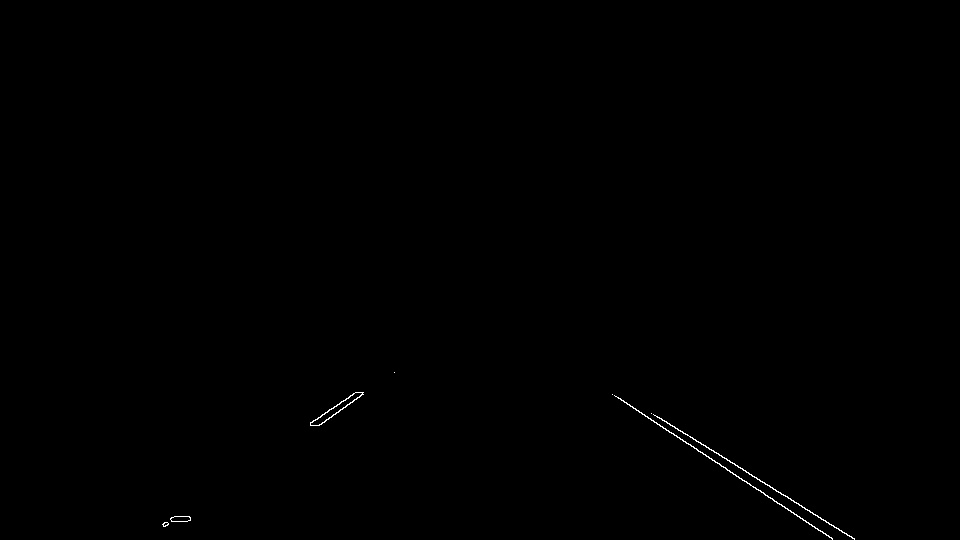

- Select the region of interest by applying a mask

- Apply the Hough transform in order to identify line segments
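The region-of-interest step can be illustrated without any imaging library. The following is a minimal sketch in plain Python, assuming a trapezoid that spans the bottom edge of the image and narrows toward the horizon. The function names and the parameters `top_y` and `top_half_width` are hypothetical and not taken from the notebook; in OpenCV the same effect is typically achieved with `cv2.fillPoly` and `cv2.bitwise_and`.

```python
def trapezoid_mask(width, height, top_y, top_half_width):
    """Return a height x width grid of booleans, True inside the trapezoid.

    Assumption: the trapezoid covers the full bottom row and narrows
    linearly to a top edge of half-width `top_half_width` at row `top_y`.
    """
    bottom_y = height - 1
    center = (width - 1) / 2
    mask = []
    for y in range(height):
        row = [False] * width
        if y >= top_y:
            # t runs from 0 at the top edge to 1 at the bottom row
            t = 1.0 if bottom_y == top_y else (y - top_y) / (bottom_y - top_y)
            half = (1 - t) * top_half_width + t * center
            for x in range(width):
                row[x] = abs(x - center) <= half
        mask.append(row)
    return mask


def apply_mask(edges, mask):
    """Zero out every edge pixel that lies outside the mask."""
    return [
        [pixel if keep else 0 for pixel, keep in zip(edge_row, mask_row)]
        for edge_row, mask_row in zip(edges, mask)
    ]
```

Everything above the row `top_y` is discarded, so edges from the sky, trees, or neighboring lanes never reach the Hough transform.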

In order to draw the left and right lane lines, I modified the draw_lines() function as follows:

- Assign each line from the Hough transform to the left or right lane based on its slope.

- Filter out lines that are not lane lines. Only lines whose slope has an absolute value between 0.5 and 2 are considered potential lane lines.

- Extrapolate the lines to the full length between the bottom of the image and the horizon.

- Calculate the x values of the bottom and top ends of each lane line as the median over the corresponding set of left or right Hough lines.

- Handle frames in which the pipeline cannot produce a lane line by reusing the previous lane line coordinates.
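The steps above can be sketched in plain Python. This is an illustration under stated assumptions, not the notebook's actual code: segments are `(x1, y1, x2, y2)` tuples, the slope filter is applied to the absolute value, and the helper names `split_by_slope`, `x_at`, and `lane_line` are hypothetical. Because the image y axis points downward, a negative slope is taken to mean the left lane.

```python
from statistics import median


def split_by_slope(segments, min_abs=0.5, max_abs=2.0):
    """Split segments into (left, right) and drop implausible slopes."""
    left, right = [], []
    for x1, y1, x2, y2 in segments:
        if x1 == x2:
            continue  # vertical segment, slope undefined
        slope = (y2 - y1) / (x2 - x1)
        if not (min_abs <= abs(slope) <= max_abs):
            continue  # too flat or too steep to be a lane line
        (left if slope < 0 else right).append((x1, y1, x2, y2))
    return left, right


def x_at(segment, y):
    """Extrapolate a segment to row y and return the x coordinate there.

    Only call this on segments that passed the slope filter, so the
    slope is never zero.
    """
    x1, y1, x2, y2 = segment
    slope = (y2 - y1) / (x2 - x1)
    return x1 + (y - y1) / slope


def lane_line(segments, y_bottom, y_top, previous=None):
    """Return one lane line from y_bottom to y_top (the assumed horizon).

    The end points are the medians of the extrapolated x values. If no
    segment survived the filter, fall back to the previous lane line.
    """
    if not segments:
        return previous
    x_bottom = median(x_at(s, y_bottom) for s in segments)
    x_top = median(x_at(s, y_top) for s in segments)
    return (round(x_bottom), y_bottom, round(x_top), y_top)
```

Using the median rather than the mean keeps a single stray segment from pulling the lane line sideways.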

Results

Identify potential shortcomings with your current pipeline

One potential shortcoming is that the pipeline draws straight lines, which cannot follow curves.

Another shortcoming is that the pipeline reuses the coordinates of previous lane lines whenever it cannot detect lane lines in the current image. For a real self-driving car, this would be very dangerous, because the car would still think that it “sees” lane lines even though it has already driven off the road.

Suggest possible improvements to your pipeline

A possible improvement would be to consider the position of the lane lines in previous images in order to validate and influence the calculation of the current results. Since the speed of the car is limited, we can assume that the street doesn’t change dramatically between two images. This could also reduce the trembling of the lane lines in the video.
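One way this idea could look is an exponential moving average over the lane line end points, combined with a plausibility check against the previous result. This is only a sketch of the suggested improvement, not part of the submitted pipeline; the class name `LaneSmoother` and the values for `alpha` and `max_jump` are assumptions that would need tuning on real video.

```python
class LaneSmoother:
    """Smooth one lane line (x1, y1, x2, y2) across video frames."""

    def __init__(self, alpha=0.2, max_jump=50):
        self.alpha = alpha        # weight of the newest detection
        self.max_jump = max_jump  # largest plausible change in pixels
        self.state = None         # smoothed line from earlier frames

    def update(self, line):
        """Blend a new detection into the state and return the result."""
        if self.state is None:
            # First frame: accept the detection as-is
            self.state = tuple(float(v) for v in line)
        elif max(abs(n - o) for n, o in zip(line, self.state)) <= self.max_jump:
            # Plausible detection: move the state a fraction toward it
            self.state = tuple(
                o + self.alpha * (n - o) for n, o in zip(line, self.state)
            )
        # Otherwise the detection is rejected and the old state is kept
        return tuple(round(v) for v in self.state)
```

A rejected detection still falls back to older data, so a real system would also need to limit how many consecutive frames may be rejected.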

Another improvement could be to integrate an algorithm that tunes the parameters of the pipeline according to the quality and needs of the input video.